Business communication education evolves rapidly, adapting to five generations' needs and preparing students for future workplaces.

In the ever-evolving landscape of higher education, few areas are changing as rapidly or as profoundly as business communication. As we stand at the crossroads of five generations, from Baby Boomers to Generation Alpha, the way we teach and learn business communication is undergoing a seismic shift. This article explores how generational changes are reshaping business communication education at the college level and offers insights on how educators can adapt to meet the needs of today's diverse student body and prepare them for the future of work.

The Generational Spectrum: Understanding Our Students

To effectively teach business communication, we must first understand the diverse generations in our classrooms. Baby Boomers and Gen X, who often return to education for career advancement, bring a wealth of experience and a preference for traditional communication methods. Millennials, straddling the divide between analog and digital, value authenticity and purpose in their communication. Gen Z, our current traditional-age students, are true digital natives who prioritize efficiency, visual communication, and social consciousness.

As we look to the future, Generation Alpha – those born after 2013 – will soon enter our lecture halls, bringing with them an innate understanding of technology that will further transform our teaching methods. This generational diversity presents both challenges and opportunities for educators. Balancing the needs and preferences of different generations while keeping the curriculum relevant and up-to-date is a significant challenge. However, this diversity also offers rich opportunities for cross-generational learning and collaboration.

Shifting Values and Communication Preferences

The values and priorities of each generation significantly impact their approach to business communication. While Baby Boomers and Gen X often emphasize formal communication structures and hierarchical respect, Millennials and Gen Z prioritize authenticity, inclusivity, and purpose-driven communication.

This shift is reflected in the transition from traditional methods like memos and formal letters to more dynamic, visual, and concise forms of communication. Infographics, video presentations, and social media posts are becoming as important as well-crafted emails in the business world. As educators, we must adapt our curriculum to reflect these changing preferences while still maintaining the core principles of effective communication.

Technology: The Great Enabler and Disruptor

The rapid advancement of technology has perhaps been the most significant factor in reshaping business communication education. Today's students expect seamless integration of digital tools in their learning experience. From collaborative platforms like Slack and Microsoft Teams to emerging technologies like augmented and virtual reality, the classroom is expanding beyond physical boundaries.

Educators must not only teach these tools but also instill an understanding of digital communication etiquette and best practices. The challenge lies in balancing the teaching of timeless communication principles with the ever-changing landscape of digital platforms. This balance is crucial in preparing students for a workplace where technological fluency is as important as traditional communication skills.

Emerging Technologies in Business Communication

As we look to the future, it's crucial to discuss emerging tools that are shaping the landscape of business communication. Artificial Intelligence is revolutionizing various aspects of communication, from AI-powered writing assistants to chatbots handling customer service inquiries. Virtual and Augmented Reality are transforming remote collaboration and presentation skills, offering immersive experiences that bridge the gap between physical and digital workspaces.

Blockchain technology is also making its mark, offering potential solutions for secure and transparent communication in business. As educators, we must stay abreast of these developments and incorporate them into our curriculum, ensuring our students are prepared for the technological realities of the modern workplace.

Personalized Learning and AI Integration

One way to address the diverse learning styles and preferences across generations is through personalized learning. Leveraging AI-powered platforms allows educators to customize the learning experience for each student based on their strengths, challenges, and communication preferences. By using adaptive learning technologies, educators can deliver content that resonates with Baby Boomers' preference for formal, structured communication while simultaneously catering to Gen Z's preference for interactive and visual tools.

Pearson's MyLab, for example, offers personalized learning through an eText, simulations, adaptive modules, assessments, case studies, and self-reflection tools. It provides immediate feedback, engages visual learners, and fosters comprehension through interactive features.

Integrating AI-driven learning platforms like Pearson's MyLab, which offers personalized study plans, could bridge gaps in generational learning preferences. These platforms also help provide real-time feedback, enhancing the adaptability of students' communication skills across generations and platforms.

Economic Realities and the Demand for Practical Skills

The economic uncertainties faced by Millennials and Gen Z have led to an increased focus on practical, job-ready skills in business communication education. Students are seeking courses that offer tangible benefits in the job market, such as effective remote work communication, digital collaboration, and data visualization.

This shift necessitates a more hands-on, experiential approach to teaching. Case studies, real-world projects, and industry partnerships are becoming essential components of effective business communication courses. By providing students with opportunities to apply their skills in real-world scenarios, we better prepare them for the challenges they'll face in their careers.

The Importance of Resilience and Lifelong Learning

Resilience is becoming a crucial skill for future workplaces, and students across generations must be equipped to handle rapid technological and societal changes. Educators should focus on fostering a growth mindset and lifelong learning, ensuring that students from all generational backgrounds are prepared to evolve with the times.

Discussions or case studies of how businesses have adapted to major communication shifts, such as the move to remote work during the pandemic, and of the role that resilience and flexibility played in those transitions, can be particularly effective. Encouraging students to see these challenges as learning opportunities will foster adaptability across generations, a skill that will serve them well throughout their careers.

The Global Perspective: Communicating Across Cultures

As businesses become increasingly global, the ability to communicate effectively across cultures is more critical than ever. Business communication education must now incorporate lessons on cross-cultural communication, global business etiquette, and the nuances of international virtual collaboration. This global perspective is essential in preparing students for a workplace where they may be communicating with colleagues and clients from around the world on a daily basis.

Neurodiversity and Inclusive Communication

An often overlooked aspect of business communication education is addressing neurodiversity. As our understanding of different cognitive styles grows, it's becoming increasingly important to teach inclusive communication strategies. This includes educating students about different communication preferences and needs, and providing techniques for effective communication with neurodiverse colleagues and clients.

Moreover, introducing assistive technologies that support communication for neurodiverse individuals in the workplace can help create a more inclusive and effective communication environment. By addressing neurodiversity in our curriculum, we prepare students to be more empathetic and adaptable communicators, ready to thrive in diverse workplace environments.

Sustainability and Environmental Consciousness in Business Communication

With the growing emphasis on sustainability in the business world, it's crucial to incorporate this aspect into business communication education. This includes teaching students about green communication practices and the environmental impact of different communication methods. Students should be prepared to effectively communicate a company's sustainability initiatives to various stakeholders.

Additionally, raising awareness about the digital carbon footprint of communication technologies and teaching strategies to minimize this impact is becoming increasingly important. By integrating sustainability into our curriculum, we prepare students to be responsible communicators in an environmentally conscious business landscape.

Data Privacy and Security in Communication

In an era where data breaches are becoming more common, understanding data privacy and security in communication is crucial. Business communication education should cover key data protection regulations like GDPR and their impact on business communication. Teaching secure communication practices, including encryption and safe file sharing, is essential.

Moreover, students should be prepared to handle crisis communication in the event of a data security incident. This knowledge not only makes students more valuable to potential employers but also prepares them to navigate the complex landscape of digital communication responsibly.

Measuring Communication Effectiveness

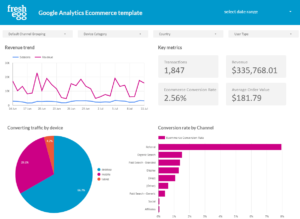

As the business world becomes increasingly data-driven, it's important to introduce students to methods of measuring communication effectiveness. This includes teaching about Key Performance Indicators (KPIs) for communication and how to use analytics tools to derive actionable insights from communication data.

Introducing concepts like A/B testing in business communication can help students understand how to optimize their communication strategies based on data. This quantitative approach to communication complements the qualitative skills traditionally taught in business communication courses, preparing students for a workplace where data-driven decision making is increasingly valued.
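For instructors who want a concrete classroom illustration of A/B testing, the following is a minimal sketch, not a prescribed method. It assumes two versions of the same email were sent to separate groups and compares their open rates with a standard two-proportion z-test. The function name and the sample counts are hypothetical and chosen only for illustration.

```python
import math


def compare_open_rates(opens_a, sent_a, opens_b, sent_b):
    """Two-proportion z-test on email open rates.

    Returns (z, p_value), where p_value is two-sided.
    All inputs are simple counts; the names are illustrative only.
    """
    rate_a = opens_a / sent_a
    rate_b = opens_b / sent_b
    # Pooled open rate under the assumption that both versions perform equally.
    pooled = (opens_a + opens_b) / (sent_a + sent_b)
    standard_error = math.sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))
    z = (rate_b - rate_a) / standard_error
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical data: version A opened 200 times out of 1,000 sends,
# version B opened 250 times out of 1,000 sends.
z, p_value = compare_open_rates(200, 1000, 250, 1000)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

A small p-value suggests the difference in open rates is unlikely to be due to chance alone. Students should still consider sample size, audience differences, and whether open rate is the right KPI before acting on the result.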

Key Takeaways

The landscape of business communication is changing rapidly, driven by generational shifts and technological advancements. As educators, our role is to bridge the gap between traditional business communication principles and the evolving needs of the modern workplace. By embracing these changes and adapting our teaching methods, we can prepare students of all generations to communicate effectively in the diverse, dynamic, and digital world of business.

The future of business communication education lies not in resisting change, but in harnessing the unique strengths of each generation to create a rich, diverse, and effective learning environment. As we navigate these shifts, we have the opportunity to shape not just the future of education, but the future of business communication itself.

To navigate these generational shifts successfully, business communication educators must embrace a flexible and adaptive approach. This includes implementing blended learning strategies, focusing on adaptability, emphasizing soft skills, staying current with industry trends, encouraging practical application of skills, and promoting ethical communication practices.

By addressing emerging technologies, neurodiversity, sustainability, data privacy, and quantitative analysis in our curriculum, we ensure that our students are well-rounded communicators prepared for the complexities of the modern business world. As we move forward, let us embrace the challenge of educating across generations, seeing it not as an obstacle, but as an opportunity to enrich our teaching and better prepare our students for the future of work.

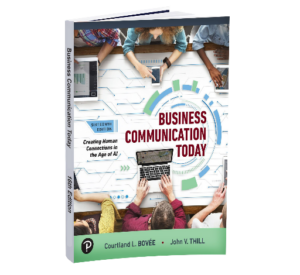

Why Business Communication Today is the Optimal Textbook for Navigating Generational Shifts in Business Communication Education

Based on insights from "Navigating Generational Shifts in Business Communication Education," Business Communication Today stands out as the ideal textbook for instructors preparing students across generations for the evolving workplace.

1. Catering to a Diverse Student Body Across Generations

Business Communication Today is designed to accommodate the learning preferences of students from multiple generations. Whether students favor traditional business writing or modern approaches such as infographics, video presentations, and interactive content, the textbook offers a flexible framework that bridges generational gaps. Its balanced content ensures that Baby Boomers, Gen X, Millennials, Gen Z, and the emerging Gen Alpha can all find relevant and engaging material suited to their learning styles.

2. Integration of Technology and Digital Tools

The textbook seamlessly incorporates discussions on digital communication tools such as Slack, Microsoft Teams, AI-powered platforms, and virtual collaboration technologies. As highlighted in the article, these tools are reshaping both the workplace and the classroom. Business Communication Today ensures students develop the digital fluency required to navigate modern communication channels professionally.

3. Focus on Practical, Job-Ready Skills

Today’s students demand hands-on, career-ready skills. Business Communication Today integrates case studies, real-world scenarios, and project-based learning to help students master key workplace competencies. From digital collaboration and remote work communication to data visualization and professional networking, the textbook equips students with practical skills they can apply immediately in professional settings.

4. Adaptability to a Rapidly Changing Workplace

While grounded in core communication principles, Business Communication Today remains highly adaptable to technological advancements. The text covers both traditional business etiquette and the evolving nature of communication tools, helping students build the resilience and adaptability needed to thrive in a fast-changing digital business environment.

5. Emphasis on Ethical and Inclusive Communication

The textbook goes beyond technical communication skills, emphasizing ethical and inclusive business communication. It provides guidance on cross-cultural interactions, neurodiverse communication strategies, and responsible messaging—essential skills in today’s diverse and globalized business world. This ensures students are prepared to engage thoughtfully with a wide range of audiences and stakeholders.

6. Supporting Personalized and Blended Learning Approaches

Business Communication Today integrates seamlessly with digital learning platforms such as Pearson’s MyLab, enabling personalized learning paths and real-time feedback. This adaptive approach caters to both traditional learners and digital-native students, ensuring an inclusive and customized learning experience.

7. Preparing Students for Lifelong Learning and Sustainability

In addition to communication fundamentals, the textbook fosters a growth mindset, encouraging students to embrace lifelong learning—a key factor in career success. It also incorporates discussions on sustainability and responsible communication, aligning with modern students’ increasing focus on ethical business practices and environmental impact.

A Textbook Built for the Future of Business Communication

Business Communication Today directly addresses the challenges and opportunities created by generational shifts in education and the workplace. Its modern, adaptable, and practical approach makes it the perfect choice for instructors who want to equip their students with the tools to succeed in the evolving world of business communication.

How Business Communication Today Solves Key Instructional Challenges
